| 3 Oct 2019 |

swedishhat swedishhat | He said I could give you his email if you wanted to throw some questions at him | 21:05:38 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 21:06:08 |

bobsmith-dpi bobsmith-dpi | To answer your question: I took the injection molding class at TechShop. So no. | 21:20:58 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 22:06:39 |

swedishhat swedishhat | https://www.xilinx.com/support/documentation/white_papers/wp505-versal-acap.pdf | 23:17:42 |

swedishhat swedishhat |

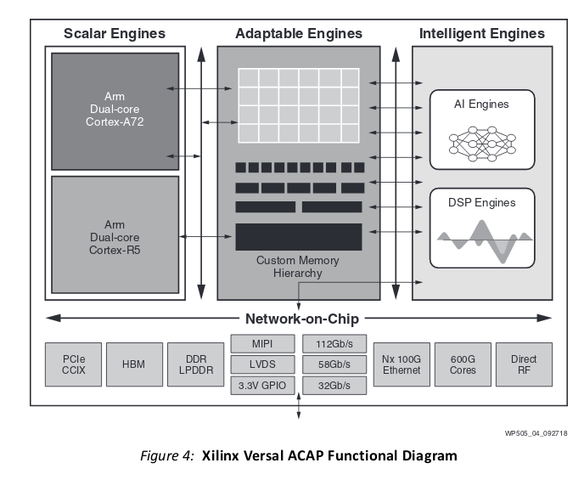

Xilinx is introducing a revolutionary new heterogeneous compute architecture, the adaptive compute acceleration platform (ACAP), which delivers the best of all three worlds—world-class vector and scalar processing elements tightly coupled to next-generation programmable logic (PL), all tied together with a high-bandwidth network-on-chip (NoC), which provides memory-mapped access to all three processing element types. This tightly coupled hybrid architecture allows more dramatic customization and performance increase than any one implementation alone

| 23:17:59 |

swedishhat swedishhat | looks like Xilinx gets it | 23:18:23 |

swedishhat swedishhat |

Download image.png | 23:19:07 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 23:19:32 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 23:20:37 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 23:20:44 |

swedishhat swedishhat | they do | 23:20:51 |

swedishhat swedishhat | https://www.xilinx.com/products/boards-and-kits/alveo.html | 23:21:15 |

swedishhat swedishhat | those are for doing datacenter FPGA acceleration. It's what AWS uses in their F1 instances | 23:21:44 |

swedishhat swedishhat | https://aws.amazon.com/ec2/instance-types/f1/ | 23:22:02 |

swedishhat swedishhat | But since the Versal is so new, I don't know if they have any datacenter products yet. But that's where it's going | 23:23:27 |

| 4 Oct 2019 |

bobsmith-dpi bobsmith-dpi | I wonder. The leader in AI right now is Nvidia. This is because its GPUs have thousands of floating point units. Since neural nets are floating point, this gives Nvidia a huge advantage over Xilinx. I just don't see Xilinx as a contender in the AI arena. Sure, they'll come up with something, but I bet if you look at the bang for the buck, they are close to last. | 00:09:18 |

bobsmith-dpi bobsmith-dpi | I could be wrong about Xilinx. Their Alveo U250 has 11.5k DSP slices. If a DSP slice is floating point then it would be competitive. | 00:11:57 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 03:05:54 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 03:06:48 |

bobsmith-dpi bobsmith-dpi | You can train a NN to use only integer coefficients. It is not as good but maybe it is good enough? | 04:50:56 |
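
A rough sketch of the integer-coefficient idea, for illustration only. It shows post-training quantization (rounding already-trained float weights to 8-bit integers), which is a simpler relative of training a net with integer coefficients, not the same thing. The function names `quantize` and `int_dot` and the example numbers are made up here, not taken from any library.

```python
def quantize(values, num_bits=8):
    """Map floats to signed integers using one shared scale (symmetric quantization)."""
    qmax = 2 ** (num_bits - 1) - 1              # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def int_dot(qw, qx):
    """Dot product using integer multiplies and adds only."""
    return sum(w * x for w, x in zip(qw, qx))


# Hypothetical weights and inputs for a single neuron.
weights = [0.5, -1.0, 0.25]
inputs = [1.0, 2.0, -4.0]

qw, w_scale = quantize(weights)
qx, x_scale = quantize(inputs)

# The integer result is rescaled once at the end to recover a float estimate.
approx = int_dot(qw, qx) * w_scale * x_scale
exact = sum(w * x for w, x in zip(weights, inputs))
```

Here `approx` lands close to `exact` but not exactly on it, which is the "not as good but maybe good enough" trade-off: all the multiply-accumulate work is integer, and only the final rescale touches floating point.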

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 04:52:25 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 04:53:11 |

@danielle.wis:matrix.org @danielle.wis:matrix.org | Redacted or Malformed Event | 04:53:50 |

bobsmith-dpi bobsmith-dpi | There are FIR design tools that restrict the coefficients to powers of 2. That way you can build the FIR using only adders and no multipliers. | 04:58:10 |
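
A minimal sketch of the multiplier-free FIR idea, assuming integer samples and coefficients rounded to the nearest signed power of two (nearest on a log scale). Each tap then becomes a bit shift plus an add or subtract, and shifts are just wiring in hardware. The helper names are hypothetical, and real design tools pick the coefficients far more carefully than this rounding does.

```python
import math


def to_power_of_two(coefficient):
    """Return (sign, exponent) so that sign * 2**exponent approximates the coefficient."""
    if coefficient == 0:
        return 0, 0
    sign = 1 if coefficient > 0 else -1
    exponent = round(math.log2(abs(coefficient)))
    return sign, exponent


def shift(sample, exponent):
    """Multiply an integer by 2**exponent using shifts only."""
    return sample << exponent if exponent >= 0 else sample >> -exponent


def fir_shift_add(samples, taps):
    """Direct-form FIR where every tap is a shift followed by an add or subtract."""
    output = []
    for n in range(len(samples)):
        acc = 0
        for k, (sign, exponent) in enumerate(taps):
            if sign == 0 or n - k < 0:
                continue                      # zero tap, or before the first sample
            term = shift(samples[n - k], exponent)
            acc += term if sign > 0 else -term
        output.append(acc)
    return output


# Example: a small smoothing filter whose coefficients are already powers of two.
taps = [to_power_of_two(c) for c in [0.5, 1.0, 0.5]]
filtered = fir_shift_add([4, 8, 12, 16], taps)   # [2, 8, 16, 24]
```

Right shifts drop the low bits, so for coefficients below 1 the result can differ slightly from the floating point filter unless the samples carry a few extra fractional bits.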

| 9 Oct 2019 |

swedishhat swedishhat | I just went to a pretty cool talk on provisioning Yocto Linux builds for different kinds of hardware | 03:10:05 |

swedishhat swedishhat | It was about as in-depth as you could expect for an hour-long meetup, but I'll post the slides and my notes in a sec | 03:10:44 |

swedishhat swedishhat | Download amillionwaystoprovisionembeddedlinuxdevices-190821133556.pdf | 03:15:21 |

swedishhat swedishhat | The talk itself was loosely affiliated with the Silicon Valley Linux Users Group (http://svlug.org/), which could be a cool avenue for getting members and giving talks. They specifically asked if anyone in the crowd wanted to give a talk at some future meeting, even if they weren't an expert | 03:21:39 |

swedishhat swedishhat | Download Embedded Linux Talk Notes.pdf | 03:22:23 |